RFC-192

by Darius Kazemi, July 11 2019

In 2019 I'm reading one RFC a day in chronological order starting from the very first one. More on this project here. There is a table of contents for all my RFC posts.

Graphics: the big picture

RFC-192 is titled “Some Factors which a Network Graphics Protocol must Consider”. It's authored by Richard Watson of SRI-ARC and dated July 12, 1971.

The technical content

This is a philosophical RFC, written in response to the (many, many, too many in my opinion) RFCs that have been published to date on network graphics protocols. The author believes that the protocols proposed so far are not complete enough to cover all use cases for interactive graphics.

The author argues for the utility of a generic graphics terminal, similar to the virtual terminal concept in the latest NETRJS RFC. He estimates that about 75% of use cases for remote graphics timesharing use simple graphics terminals that display graphics but can't animate (storage tubes, more on this later), about 20% would require displays that refresh quickly, and maybe 5% require maximum cutting-edge graphical power. The author is concerned with the 95% of cases rather than the 5%.

He lists a bunch of requirements for the systems design of a network graphics protocol. Most of them are pretty straightforward and have been addressed by previous proposals, but the one that stands out to me is:

3) The software support should allow technicians, engineers, scientist, and business analysts as well as professional programmers to easily create applications using a graphic terminal.

He goes on to address this exact issue in the next section:

If one wants to create as system which is easy to use by inexperienced programmers and ultimately non-programmers, one needs to provide powerful problem-oriented aids to program writing. One has to start with the primitive machine language used to command the graphics system hardware and build upward. The philosophy of design chosen is the one becoming more common in the computer industry, which is to design increasingly more powerful levels of programming support, each of which interfaces to its surrounding levels and builds on the lower levels. It is important to try to design these levels more or less at the same time so that the experience with each will feed back on the designs of the others before they are frozen and difficult to change.

He sketches out five “levels” that need to be considered when designing for non-programmers.

- System calls

- A given programming language's interface to the system calls

- The actual picture drawing/editing/storage system

- Data structures for picture data

- The end-user application itself

He then looks at popular display hardware in 1971, which he breaks into two categories: storage tube displays, and refreshed displays.

A refreshed display is what all displays are today: something that updates the whole screen N times per second, and thus lets you change what is on the screen at a decent frame rate, displaying fluid animations. These cost anywhere from $10,000 to “several hundred thousand dollars” in 1971.

A storage tube display is meant to show static images. The graphics on the screen can be redrawn but very, very slowly. I've seen some of these in action at the VintageTEK museum and a complicated picture of, say, a circuit board diagram, can take something like 20 seconds to draw, though the resulting picture is beautiful, crisp, and incredibly high-resolution. (Imagine a robot very quickly and efficiently using an Etch A Sketch and you'll have the right idea.) These displays are much cheaper than refreshed displays: about $20,000 at the high end, and many are significantly cheaper than that.

He also gives an overview of various input devices, which we covered in RFC-178.

His requirements for user software are really interesting and are worth reading in full, but the gist is:

- pictures should be represented by graphical primitives and these primitives should be able to be grouped into logical picture parts

- picture parts should be selectable, and picture parts should be able to have metadata associated with them (so click on the window of a house in a CAD type drawing and there might be data about what model of window that is)

- "user should be able to do some graphic programming by drawing directly at the console"
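To make the first two requirements a bit more concrete, here is a minimal Python sketch of primitives grouped into a named picture part that carries metadata. The class names (`Line`, `PicturePart`), the `rectangle` helper, and the window example are all my own illustrative assumptions; none of them come from the RFC.

```python
from dataclasses import dataclass, field


@dataclass
class Line:
    """A graphical primitive: a straight segment between two points."""
    x1: float
    y1: float
    x2: float
    y2: float


@dataclass
class PicturePart:
    """A named group of primitives with free-form metadata attached."""
    name: str
    primitives: list = field(default_factory=list)
    metadata: dict = field(default_factory=dict)


def rectangle(x, y, w, h):
    """Build a rectangle outline out of four Line primitives."""
    return [
        Line(x, y, x + w, y),
        Line(x + w, y, x + w, y + h),
        Line(x + w, y + h, x, y + h),
        Line(x, y + h, x, y),
    ]


# Hypothetical example: a window in a drawing of a house.
window = PicturePart(
    name="window",
    primitives=rectangle(2, 3, 1, 1),
    metadata={"model": "example double-hung"},  # made-up value
)

print(len(window.primitives), window.metadata["model"])
```

The point of the grouping is that an application can treat "the window" as one selectable thing and look up its metadata, rather than dealing with four unrelated lines.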

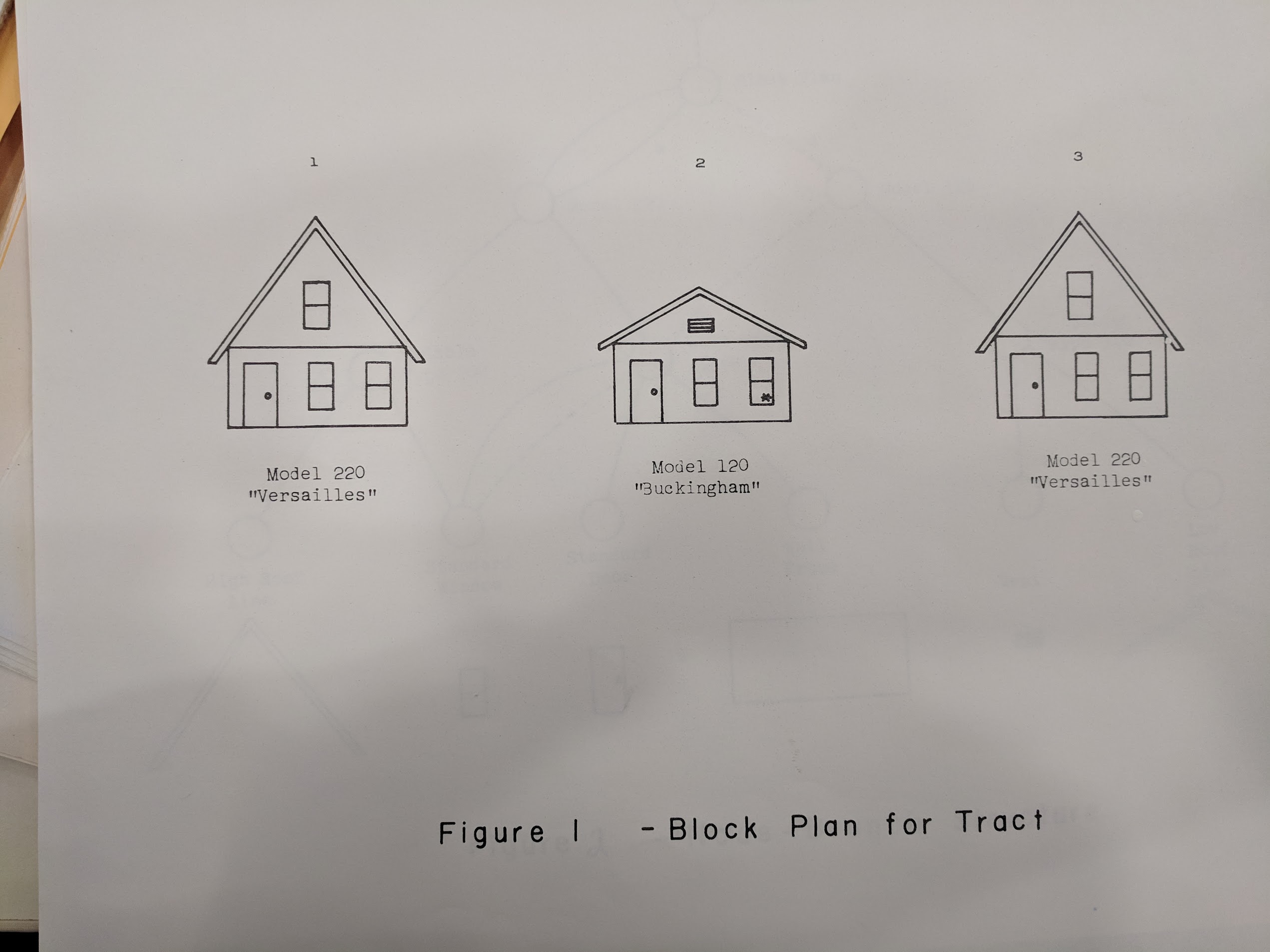

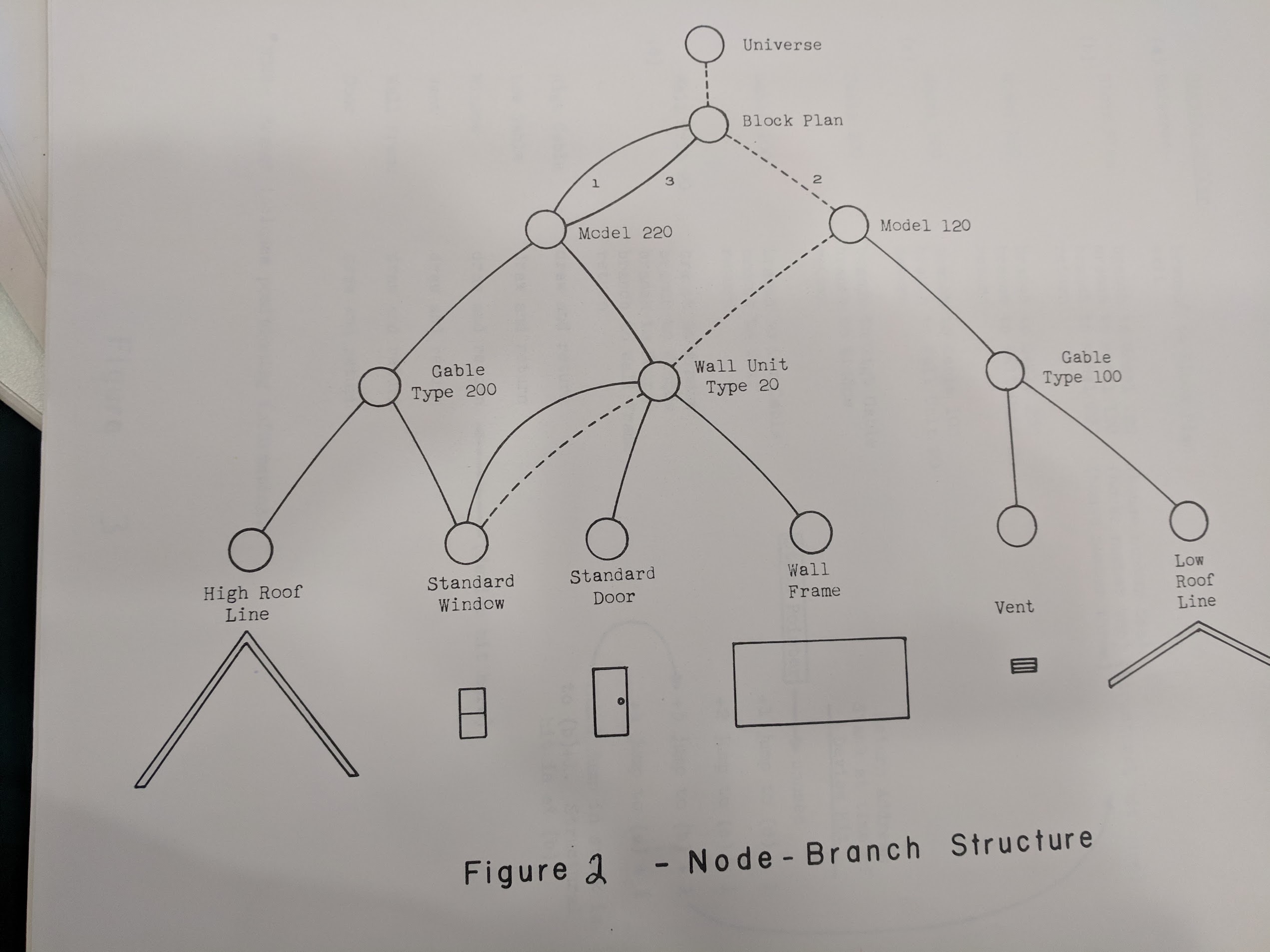

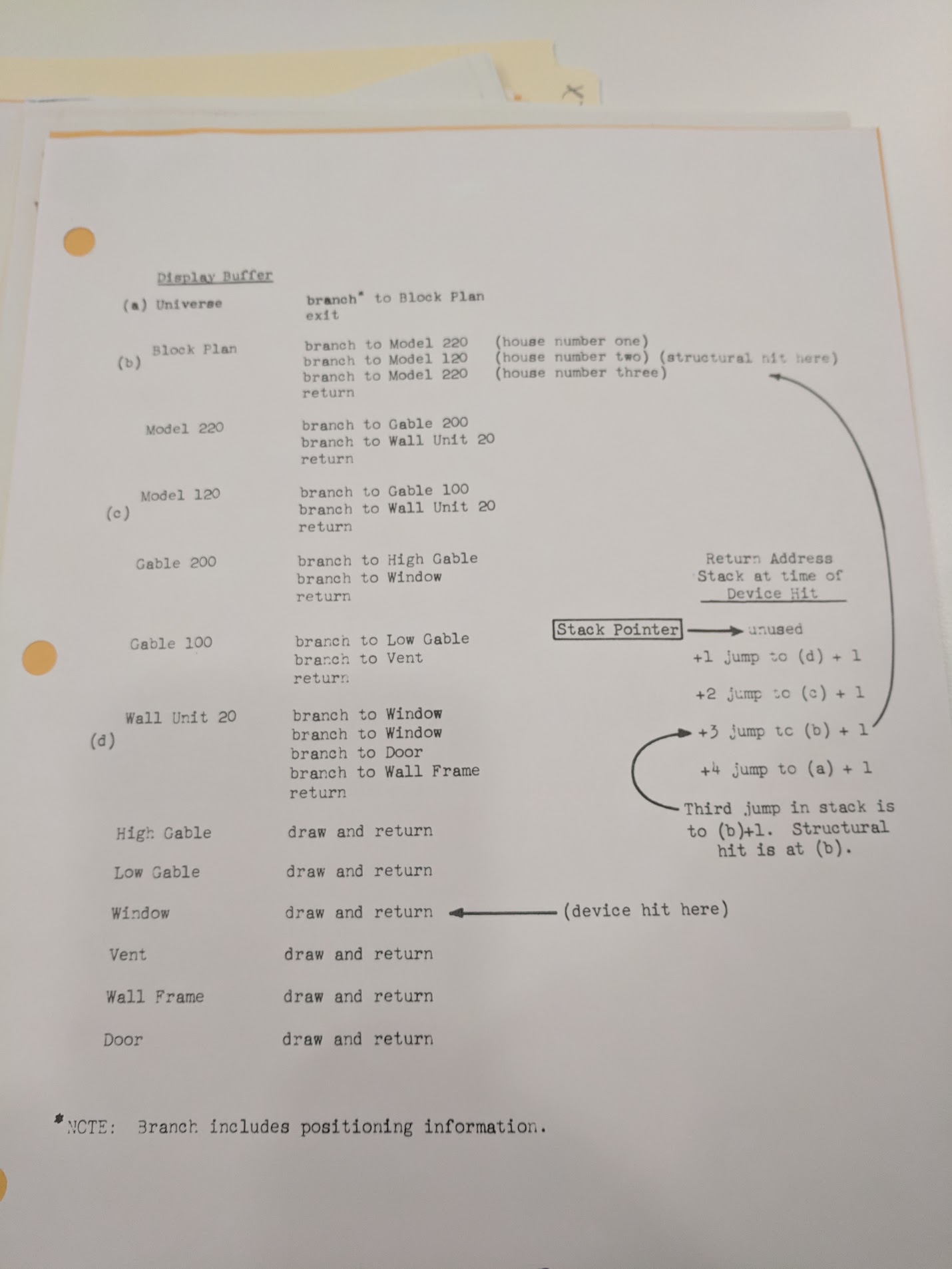

Then he describes data structures. "A line is much more than just a line when it represents a boundary or a part of some more complex unit". He talks about how a data structure for an entire image could contain useful information about parent-child relationships, and important metadata for each individual picture part. He seems to be describing a scene graph (see below for some missing figures). One case that he is concerned with is: if you click on a piece of a house, are you clicking on the whole house, or just the piece? How do you determine user intent?
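Here is a rough Python sketch of the house problem, assuming a simple tree of picture parts with bounding boxes. The `Node` class, the `pick` and `candidates` functions, and the coordinates are hypothetical names and values I made up for illustration; the RFC does not specify any of this. The idea is that a pick finds the innermost part under the pen, and the parent links give the application every enclosing part the user might have meant.

```python
class Node:
    """A picture part in a tree; children are sub-parts."""

    def __init__(self, name, bbox=None, **metadata):
        self.name = name
        self.bbox = bbox  # (xmin, ymin, xmax, ymax), or None for a pure group
        self.metadata = metadata
        self.parent = None
        self.children = []

    def add(self, child):
        """Attach a sub-part and record its parent link."""
        child.parent = self
        self.children.append(child)
        return child

    def contains(self, x, y):
        if self.bbox is None:
            return False
        xmin, ymin, xmax, ymax = self.bbox
        return xmin <= x <= xmax and ymin <= y <= ymax


def pick(node, x, y):
    """Return the deepest node whose bounding box contains (x, y), or None."""
    for child in node.children:
        hit = pick(child, x, y)
        if hit is not None:
            return hit
    return node if node.contains(x, y) else None


def candidates(node):
    """List the picked node and all of its ancestors, innermost first."""
    chain = []
    while node is not None:
        chain.append(node.name)
        node = node.parent
    return chain


house = Node("house", bbox=(0, 0, 10, 8))
wall = house.add(Node("front wall", bbox=(0, 0, 10, 5)))
wall.add(Node("window", bbox=(2, 2, 4, 4), model="hypothetical"))

hit = pick(house, 3, 3)
print(candidates(hit))  # ['window', 'front wall', 'house']
```

The data structure can't decide which of those three the user intended. It can only hand the application the list, and the application (or the user, through some further interaction) has to choose the level.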

There is a lot of good stuff in this RFC, and I'd recommend that anyone with an interest in computer graphics give it a read. There's a whole section about “light buttons”: rectangles with labels on them that, when pressed with a light pen, issue a command related to the text on the rectangle. There's also a very in-depth discussion of what a back button (or “back pointer” in this case) actually is and does.
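A light button is easy to sketch in a few lines of Python. This is only my own illustration of the idea, assuming the light pen reports an (x, y) position; the `LightButton` class, the `press` function, and the two menu commands are hypothetical and not taken from the RFC.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class LightButton:
    """A labeled rectangle that runs a command when the pen hits it."""
    label: str
    x: float
    y: float
    width: float
    height: float
    command: Callable[[], str]

    def contains(self, px, py):
        return (self.x <= px <= self.x + self.width
                and self.y <= py <= self.y + self.height)


def press(buttons, px, py):
    """Run the command of the first button under the pen, if any."""
    for button in buttons:
        if button.contains(px, py):
            return button.command()
    return None


# Hypothetical two-item menu drawn on the screen.
menu = [
    LightButton("DELETE", 0, 0, 10, 2, lambda: "delete selected part"),
    LightButton("COPY", 0, 3, 10, 2, lambda: "copy selected part"),
]

print(press(menu, 5, 4))  # copy selected part
```

Pressing anywhere outside both rectangles returns `None`, so the caller can treat that as an ordinary pick on the picture instead of a command.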

Analysis

There are two figures referenced in the official version of this RFC, but the figures are not included anywhere online. I found them in the original documents at the Computer History Museum's archive, so here are my low-quality photos.

The scene graph stuff (though he doesn't use that term) seems to be pretty cutting edge for the time! I'm trying to dig up prior art on the concept of scene graphs as I'm curious as to exactly how cutting edge this is.

There is also a figure 3 that is pseudocode for parsing the scene graph that is entirely missing from the canonical RFC as well:

How to follow this blog

You can subscribe to this blog's RSS feed or if you're on a federated ActivityPub social network like Mastodon or Pleroma you can search for the user “@365-rfcs@write.as” and follow it there.

About me

I'm Darius Kazemi. I'm an independent technologist and artist. I do a lot of work on the decentralized web with ActivityPub, including a Node.js reference implementation, an RSS-to-ActivityPub converter, and a fork of Mastodon, called Hometown. You can support my work via my Patreon.