A Feminist Approach to Ethical Principles in Artificial Intelligence

By Paz Peña O.


This presentation was delivered at the Women in AI Ethics (WAIE) Annual Event 2020. The original title was “Artificial Intelligence in Latin America: A Feminist Approach to Ethical Principles.”

I have a few minutes to intervene. My feeble ability to improvise in Spanish, which is accentuated in English, led me to write down my intervention about the aspects that I want to discuss here, in this short presentation, without wasting much time.

As an independent researcher, I am associated with Coding Rights, a Brazilian NGO working at the intersection of technology and feminism, to do joint research on critical feminist perspectives from Latin America on Artificial Intelligence.

Among our objectives, we are mapping the use of algorithmic decision-making tools by States in Latin America to determine the distribution of goods and services, including education, public health services, policing, and housing, among others.

There are at least three common characteristics in these systems used in Latin America that are especially problematic given their potential to increase social injustice in the region.

Examining these characteristics also allows us to think critically about the hegemonic perspectives on ethics that today dominate the Artificial Intelligence conversation.

The first characteristic is the identity forced onto poor individuals and populations. The majority of these systems are designed to control poor populations. Cathy O'Neil (2016, Weapons of Math Destruction: How Big Data Increases Inequality and Threatens Democracy) already observed this when analyzing the uses of AI in the United States, noting that these systems “tend to punish the poor.” She explains:

This is, in part, because they are engineered to evaluate large numbers of people. They specialize in bulk, and they're cheap. That's part of their appeal. The wealthy, by contrast, often benefit from personal input. [...] The privileged, we'll see time and again, are processed more by people, the masses by machines.

This quantification of the self, of bodies (understood as socially constructed) and communities, has no room for re-negotiation. In other words, datafication replaces “social identity” with “system identity” (Arora, P., 2016, The Bottom of the Data Pyramid: Big Data and the Global South).

The second characteristic of these systems that reinforces social injustice is the lack of transparency and accountability.

None of them have been developed through a participative process of any type, whether including specialists or, even more important, affected communities. Instead, AI systems seem to reinforce top-down public policies from governments that make people “beneficiaries” or “consumers”.

Finally, these systems are developed in what we would call “neoliberal consortiums,” where governments develop or purchase AI systems built by the private sector or universities. This deserves further investigation, as neoliberal values, such as the extractivist logic of production, seem to pervade the way AI systems are designed in this part of the world, not only by companies but also by universities supported by public funds dedicated to “innovation” and improving trade.

These three characteristics of AI systems deepen structural problems of injustice and inequity in our societies. The question is why, for some years now, we have believed that a set of ethical principles “will fix” the structural problems of our society that are reflected by AI.

From a feminist and decolonial perspective, based on Latin America's experience, we are concerned about at least three aspects of the ethics debate in Artificial Intelligence:

In a neoliberal context of technology development, it is worrying that ethical principles are promoted as a form of self-regulation, displacing human rights frameworks, which are ethical principles accepted by many countries in the world and enforceable by law. In a world where the enforcement of and respect for human rights are becoming increasingly difficult, this is simply unacceptable.

The ethical principles discussion, which is basically a situated practice in a cultural context, is dominated by the Global North. I always tell this anecdote: in 2018, at a famous international conference, one of the panelists – a white male – said that ethical principles in AI could be based on universally recognized documents such as the Constitution of the United States of America (!). You only have to review the 74 sets of ethical principles that were published between 2016 and 2019 to see which voices dominate and, more importantly, which voices are silenced.

Much of the discussion on ethical principles takes for granted that all use of Artificial Intelligence is good and desirable. In this view, the exacerbation of social injustice that these systems can cause is only a bug that could be solved by the correct use of a set of ethical principles designed in the Global North.

Ethical principles are fundamental to any human practice with our environment. But an ethical principle that ends up replicating oppressive relationships is simply a whitewashing of toxic social practices.

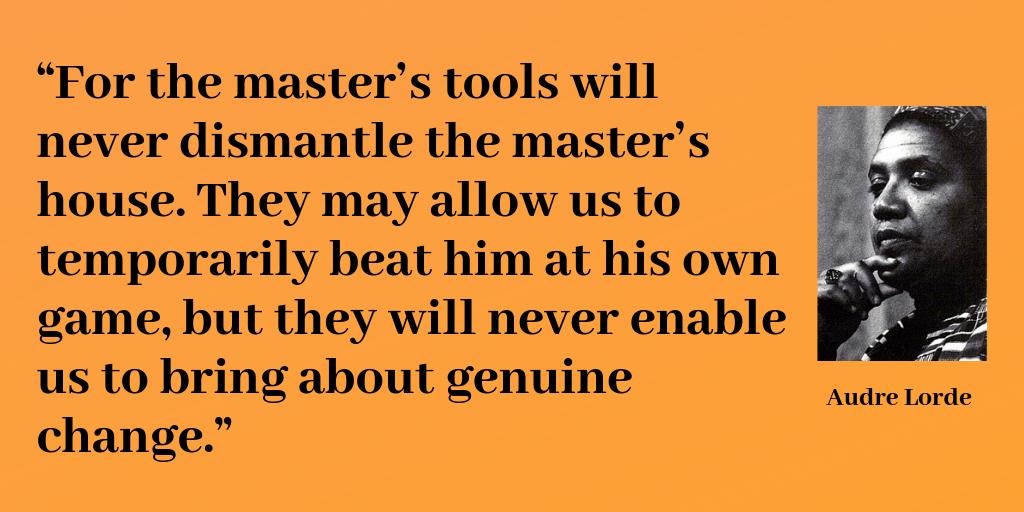

The framework of critical feminism serves greatly to understand this. As Audre Lorde said at a conference in 1979, “the master's tools will never dismantle the master's house.”

So how do we continue?

We must work towards a feminist and emancipatory ethics for technology. To that end, I suggest basing our actions on at least three pillars:

Reject the illusion, installed mainly in Silicon Valley since the 1990s, that technological innovation is always an improvement regardless of its social consequences. In other words, we must dismantle that patriarchal and hegemonic idea of “Unless you are breaking stuff, you aren't moving fast enough,” as Mark Zuckerberg proclaims while the world is breaking apart.

We must understand that, in many cases, Artificial Intelligence improves a dangerous state of capitalism in which – using Isabelle Stengers' words – there is a systematic expropriation of what makes us capable of thinking together about the problems that concern us. In other words, we must refuse to accept the extermination of spaces for social deliberation because of decision automation. Let's claim our right to think about the world together. Let's claim our right to be political beings.

Perhaps the most urgent thing is to deconstruct the ideology that assumes that Artificial Intelligence can solve any complex social problem and, as an imperative consequence, to challenge the idea that a person's destiny could be defined exclusively by automated binary decisions based on a set of personal data from the past.

That goes against the very essence of human dignity and, above all, against any idea of social justice, when all of us here know that the current social systems will end up classifying and defining the destiny of only the less privileged people.

Women of the world: we don't just need to fix software; we must challenge our societies' unequal structures, and only then can we have a fair Artificial Intelligence.

Thanks.